Consider a field engineer standing in the concrete sub-basement of a commercial manufacturing facility, entirely cut off from cellular and wireless networks. She needs to extract specific electrical safety clauses from a dense, 500-page schematic manual using a mobile PDF editor, execute a complex diagnostic routine, and log the preliminary compliance data into the enterprise CRM system. She is equipped with a company-issued iPhone 11. Three floors above her, the site manager is monitoring incoming data streams on an iPhone 14 Pro, waiting for the system to synchronize. This environment represents the true operational test for modern artificial intelligence. It is not about what algorithms can achieve in pristine laboratory conditions; it is about what they can execute in the harsh, disconnected realities of daily commerce.

Edge-enabled enterprise AI is the practice of running optimized neural networks directly on local hardware so that processing continues uninterrupted, regardless of external network connectivity. In my six years working as an AI engineer, building architectures that function in the field, I have learned that utility depends entirely on this localized resilience. Enterprise technology leaders and operations directors, the primary architects of these digital ecosystems, are finding that raw processing power matters far less than accessibility. When we build systems for the field, we are not just writing code; we are engineering localized autonomy.

Hardware fragmentation creates the ultimate deployment test

One of the most persistent realities in enterprise technology is the mixed-hardware fleet. Organizations rarely upgrade every device at once. A corporate ecosystem might easily contain entry-level legacy devices, mid-tier workhorses, and premium flagships all in service at the same time. Building software that assumes maximum hardware capacity is a guaranteed path to operational failure.

When developing AI-powered mobile solutions, the architecture must scale elastically across this hardware spectrum. An older device, limited by its neural engine and thermal constraints, still needs to execute core machine learning tasks without draining the battery in twenty minutes. Conversely, when the application runs on newer hardware, such as the standard iPhone 14 or the larger-screened iPhone 14 Plus, it should take advantage of the additional memory and memory bandwidth to process more complex local inferences. The software must dynamically assess the hardware it lives on and adjust its computational load accordingly.
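As a rough illustration of that hardware assessment, the sketch below picks the most capable model variant a device can currently run. Everything in it is an assumption made for the example: the `DeviceProfile` fields, the variant names, and the memory and battery thresholds are hypothetical, and the thermal labels only loosely mirror the states a mobile operating system might report.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DeviceProfile:
    """Hypothetical snapshot of what the app can learn about its host device."""
    free_memory_mb: int
    has_neural_accelerator: bool
    thermal_state: str  # "nominal", "fair", "serious", or "critical"
    battery_percent: int


@dataclass(frozen=True)
class ModelVariant:
    name: str
    required_memory_mb: int
    needs_accelerator: bool


# Ordered from most to least demanding; names and sizes are illustrative only.
VARIANTS = [
    ModelVariant("large-fp16", required_memory_mb=1500, needs_accelerator=True),
    ModelVariant("medium-int8", required_memory_mb=600, needs_accelerator=True),
    ModelVariant("small-int8", required_memory_mb=150, needs_accelerator=False),
]


def select_variant(device: DeviceProfile) -> ModelVariant:
    """Return the most capable variant the device can run right now."""
    # Under thermal or battery pressure, fall straight back to the lightest model.
    if device.thermal_state in ("serious", "critical") or device.battery_percent < 20:
        return VARIANTS[-1]
    for variant in VARIANTS:
        if variant.needs_accelerator and not device.has_neural_accelerator:
            continue
        if variant.required_memory_mb <= device.free_memory_mb:
            return variant
    # Nothing fits comfortably: still return the lightest model so core tasks run.
    return VARIANTS[-1]


older_phone = DeviceProfile(400, True, "nominal", 80)
newer_phone = DeviceProfile(2000, True, "nominal", 80)
print(select_variant(older_phone).name)
print(select_variant(newer_phone).name)
```

The design choice worth noting is that the function always returns something: an overheated or low-battery device gets the lightest variant rather than an error, which keeps the core task available.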

This dynamic scaling is critical because the end-user workflow remains identical regardless of the device. The technician relies on their tools to function reliably. If a contract analysis tool requires cloud processing to summarize a document, and the technician loses connectivity, the application effectively ceases to exist for that user. By pushing optimized, task-specific models directly to the edge, we ensure that fundamental tasks survive network degradation.
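One way to picture this is an offline-first pattern: the summary is produced on the device immediately, and the result waits in a local outbox until a connection returns. The sketch below is a minimal version of that idea, not a production design. The class name, the `summarize_locally` and `upload` callables, and the in-memory queue are all hypothetical stand-ins; a real application would persist the outbox to disk and call an actual on-device model.

```python
from collections import deque
from typing import Callable, Deque, Dict


class OfflineFirstLogger:
    """Runs a local model immediately and defers uploads until a link exists."""

    def __init__(
        self,
        summarize_locally: Callable[[str], str],
        upload: Callable[[Dict[str, str]], None],
    ) -> None:
        self._summarize = summarize_locally  # stand-in for an on-device model
        self._upload = upload                # stand-in for the CRM sync call
        self._outbox: Deque[Dict[str, str]] = deque()

    def process(self, document_id: str, text: str) -> str:
        """Summarize on the device and queue the result; never touches the network."""
        summary = self._summarize(text)
        self._outbox.append({"document_id": document_id, "summary": summary})
        return summary

    def pending(self) -> int:
        """Number of records still waiting to be uploaded."""
        return len(self._outbox)

    def sync(self, is_online: bool) -> int:
        """Flush queued records in order when online; return how many were sent."""
        if not is_online:
            return 0
        sent = 0
        while self._outbox:
            # Upload first, remove second: a failed upload leaves the record queued.
            self._upload(self._outbox[0])
            self._outbox.popleft()
            sent += 1
        return sent
```

In use, the technician in the sub-basement calls `process` and gets a summary right away, while `sync(is_online=True)` runs later, once the device is back in coverage.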

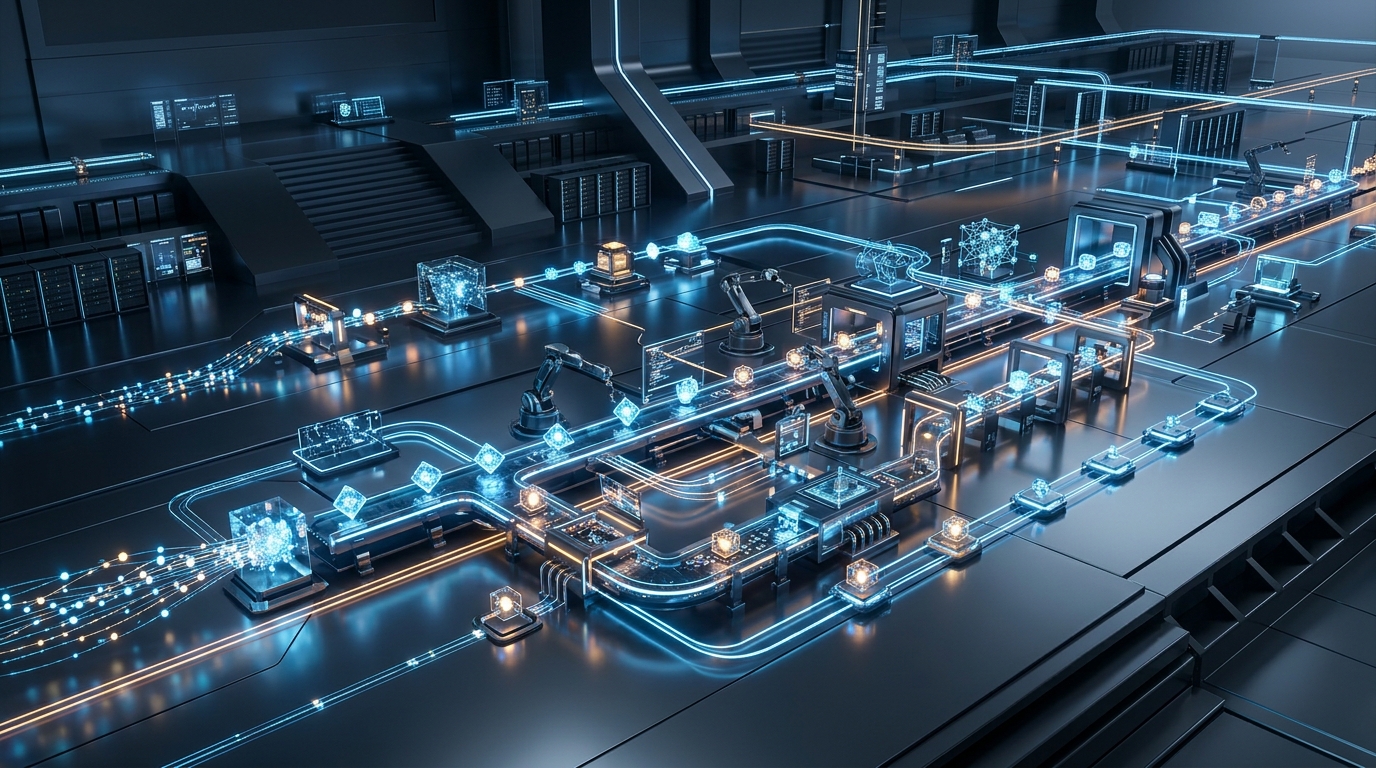

Organizations are constructing localized processing ecosystems

The industry is moving away from the assumption that all artificial intelligence must live in distant, centralized server farms. The logistical and financial overhead of transmitting every piece of enterprise data back and forth to a server is becoming unsustainable, especially as data volume multiplies. We are seeing a fundamental restructuring of how companies approach infrastructure.

A recent analysis published in the MIT Sloan Management Review highlighted this exact shift. Columnists Thomas H. Davenport and Randy Bean noted that rather than building massive data centers—a task generally left to vendors—companies that utilize these technologies are creating internal "AI factories." These factories are combinations of technology platforms, optimized methods, proprietary data, and previously developed algorithms that make it highly efficient to build and deploy intelligent systems at scale. By standardizing the pipeline, organizations can rapidly push optimized neural models out to the edge.

This localized infrastructure is driving massive economic shifts. According to recent market reports, the neural network software market expanded from $31.2 billion in 2023 to a projected $38.5 billion in 2024, driven largely by enterprise automation efforts and the proliferation of edge-capable software. As a firm operating deeply within this space, NeuralApps observes this trend firsthand. Enterprises are no longer satisfied with renting remote intelligence; they want to own and deploy localized intelligence that lives directly on their fleet devices.

Task-specific agents outmaneuver generalized systems

Building an AI factory requires making strict decisions about what models are actually necessary. Pushing a massive, generalized language model onto a phone is highly inefficient. Generalized models carry immense computational weight because they are trained to answer questions about everything from quantum physics to ancient history. A field technician does not need their device to write poetry; they need it to accurately categorize a maintenance record.

Instead, the industry is shifting toward highly specialized, autonomous agents. These are small, fiercely optimized neural networks trained to execute one very specific task exceptionally well. Gartner has projected that by 2026, 40% of enterprise applications will feature embedded task-specific AI agents. Because these models are computationally lightweight, they can reside comfortably within the memory constraints of standard hardware, operating quietly in the background to resolve user friction without draining system resources.

When you combine these small models, you create a complex, responsive environment. One agent might handle optical character recognition for document scanning, while another routes the extracted data into the appropriate database fields. My colleague Umut Bayrak covered this topic in detail, exploring the technical mechanics of integrating efficient neural networks and localized agents into mobile hardware architectures. The goal is always to reduce the distance between the user's intent and the completed action.
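The chaining of small agents described above can be sketched as a simple pipeline in which each agent takes a payload, adds to it, and hands it to the next. This is only an illustration under stated assumptions: `ocr_agent` is a placeholder that treats the scan as already being text, `routing_agent` uses regular expressions where a trained model would sit, and the field names `asset_id` and `status` are hypothetical.

```python
import re
from typing import Callable, Dict, List

# Each agent is a function that receives a payload and returns an enriched copy.
Agent = Callable[[Dict[str, str]], Dict[str, str]]


def ocr_agent(payload: Dict[str, str]) -> Dict[str, str]:
    """Placeholder for on-device OCR: here the 'scan' is already plain text."""
    return {**payload, "text": payload["scan"].strip()}


def routing_agent(payload: Dict[str, str]) -> Dict[str, str]:
    """Map extracted text onto hypothetical database fields."""
    text = payload["text"]
    fields: Dict[str, str] = {}
    asset = re.search(r"Asset:\s*(\S+)", text)
    status = re.search(r"Status:\s*(\w+)", text)
    if asset:
        fields["asset_id"] = asset.group(1)
    if status:
        fields["status"] = status.group(1)
    return {**payload, **fields}


def run_pipeline(agents: List[Agent], payload: Dict[str, str]) -> Dict[str, str]:
    """Pass the payload through each agent in order."""
    for agent in agents:
        payload = agent(payload)
    return payload


record = run_pipeline([ocr_agent, routing_agent], {"scan": "  Asset: PUMP-7 Status: PASS  "})
print(record["asset_id"], record["status"])
```

Because every agent shares the same small interface, one can be swapped for a lighter or heavier model on a given device without touching the rest of the chain.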

A sustainable infrastructure requires strict product discipline

Transitioning from a traditional cloud-reliant architecture to an edge-first AI factory requires rigorous discipline in development. Every feature must justify its computational cost. It is easy to build applications that look impressive in a boardroom demonstration, but evaluating software requires looking at how it performs during a frantic, high-pressure shift on a rainy Tuesday afternoon.

When selecting or developing these platforms, technology leaders should apply a strict evaluation framework. First, determine the offline capability: what exact percentage of the application's core value proposition functions without an internet connection? Second, evaluate hardware elasticity: does the application gracefully degrade its processing intensity on older devices while maximizing the neural capacity of newer ones? Finally, assess workflow integration: does the intelligence naturally sit within the user's existing path, or does it require them to abandon their current screen to interact with a separate conversational interface?
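The first question in that framework can be turned into a number. The sketch below shows one possible way to do it, assuming each core feature is given a weight for how much it contributes to the application's value. The `Feature` type, the weights, and the feature names are invented for the example and would need to come from your own product analysis.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Feature:
    name: str
    weight: float        # relative share of the app's core value (hypothetical)
    works_offline: bool


def offline_coverage(features: List[Feature]) -> float:
    """Return the weighted percentage of core value available without a network."""
    total = sum(f.weight for f in features)
    if total <= 0:
        raise ValueError("feature weights must sum to a positive number")
    offline = sum(f.weight for f in features if f.works_offline)
    return 100.0 * offline / total


features = [
    Feature("clause extraction", weight=5, works_offline=True),
    Feature("diagnostic routine", weight=3, works_offline=True),
    Feature("CRM synchronization", weight=2, works_offline=False),
]
print(f"{offline_coverage(features):.0f}% of core value works offline")
```

A weighted score is a deliberate simplification: it makes the offline gap visible at a glance, but it says nothing about hardware elasticity or workflow integration, which still need to be assessed separately.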

As a software development firm specializing in these architectures, our philosophy at NeuralApps is rooted in this practical reality. We believe that innovative digital experiences are not defined by how complex their underlying algorithms are, but by how quietly they solve the user's problem. When we build systems that respect hardware limits, minimize cloud dependencies, and prioritize localized execution, we deliver software that actually works when it matters most.